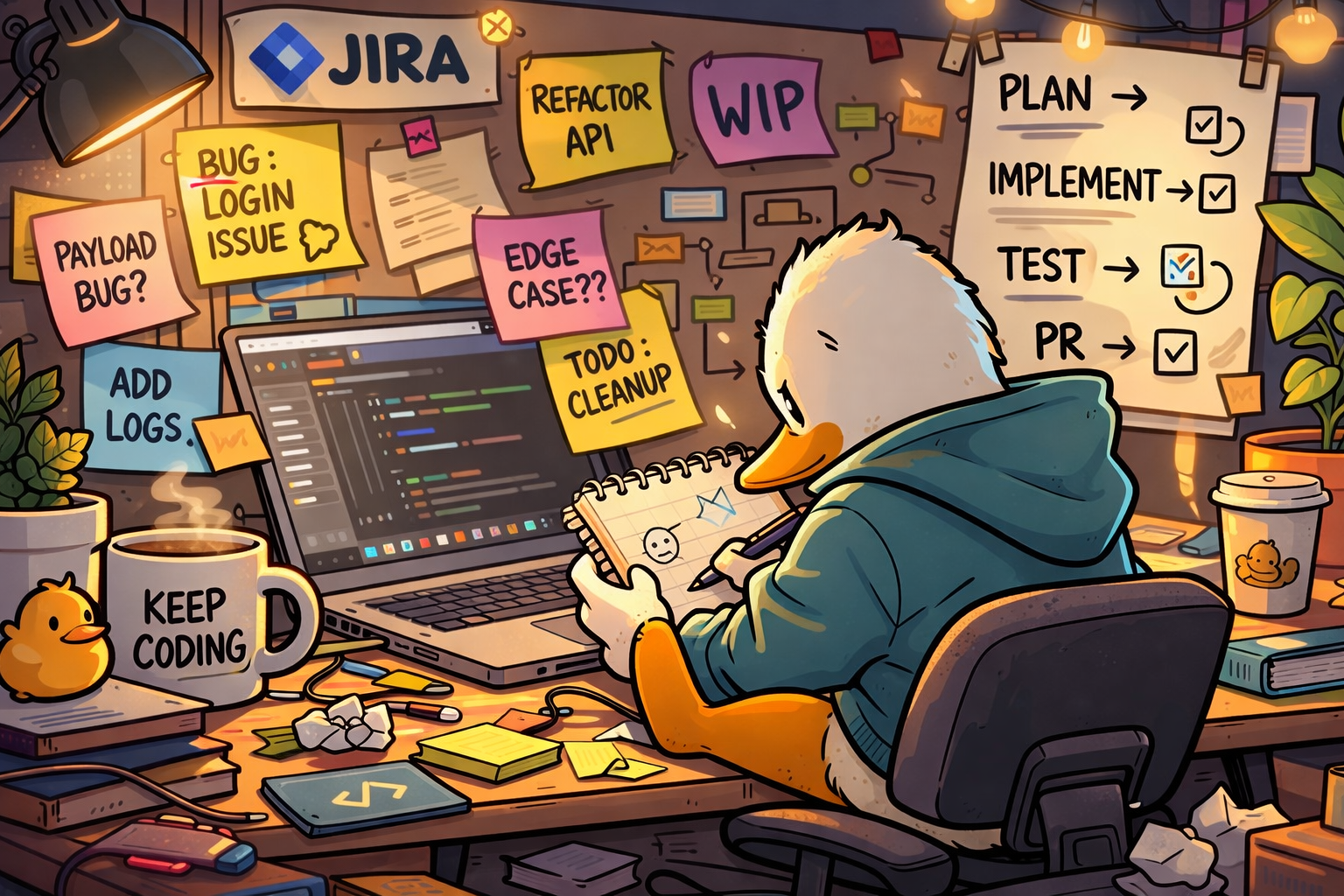

Tickets That Can Drive Themselves 🦆

I don’t have any big revelations today. This is more of a progress report on a workflow I’ve been slowly assembling.

The goal is fairly simple on paper. For smaller issues, the really well-groomed kind, I want to be able to assign a Jira ticket directly to one of my agents and have it move the task forward without much involvement from me.

The rough shape of the flow looks like this.

I assign the ticket to the agent. The agent reads the ticket, switches into a planning mode, and produces a plan for implementing the work. That plan gets written back into the Jira ticket itself, and the ticket moves to a status that effectively says “human review required.”

At that point I read the plan and either adjust it or accept it. Moving the ticket to the next state is my signal that the plan has been approved.

Once that happens the agent picks the ticket back up, re-reads the Jira issue, re-reads the plan it generated earlier, and begins implementing the work. From there it runs in a loop: implement something, run tests, adjust, repeat until the task is complete.

When it finishes, it opens a pull request and moves the ticket into whatever state corresponds to “ready for review.”

That’s the end state I’m working toward.
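One way to picture the flow above is as a small state machine. This is only a sketch: the status names and the `advance` helper are made up for illustration, and a real Jira workflow would use whatever statuses the project already defines.

```python
from enum import Enum


class Status(Enum):
    # Hypothetical status names; a real Jira workflow will have its own.
    ASSIGNED = "Assigned to Agent"
    PLAN_REVIEW = "Plan Review"          # the "human review required" state
    PLAN_APPROVED = "Plan Approved"
    IN_PROGRESS = "In Progress"
    READY_FOR_REVIEW = "Ready for Review"


# (current status, who is acting) -> next status
TRANSITIONS = {
    (Status.ASSIGNED, "agent"): Status.PLAN_REVIEW,          # agent writes its plan to the ticket
    (Status.PLAN_REVIEW, "human"): Status.PLAN_APPROVED,     # moving the ticket is the approval
    (Status.PLAN_APPROVED, "agent"): Status.IN_PROGRESS,     # agent re-reads ticket and plan
    (Status.IN_PROGRESS, "agent"): Status.READY_FOR_REVIEW,  # work done, pull request opened
}


def advance(status: Status, actor: str) -> Status:
    """Return the next status, or raise if this actor may not move the ticket."""
    try:
        return TRANSITIONS[(status, actor)]
    except KeyError:
        raise ValueError(
            f"{actor} cannot advance a ticket in {status.value!r}"
        ) from None
```

Keying the table on the actor as well as the status makes the checkpoint explicit: only a human can move a ticket out of plan review.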

There are still a few architectural questions I haven’t settled yet. For example, it may make sense to have separate agents for grooming and implementation. One could focus on understanding and structuring the work while another focuses on writing code. I haven’t decided whether that separation actually improves things or just adds ceremony.

The piece I’m still designing is the connector that ties all of this together.

I don’t want to use an LLM just to poll Jira for updates. That feels wasteful and fragile. What I want instead is a very small connector that reacts to webhooks. Jira fires the webhook when a ticket changes state, and the connector signals the appropriate agent to wake up and do the next thing.

So the connector becomes the traffic cop and the agents stay focused on the work.
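Since the connector is still on the drawing board, here is only a rough sketch of its routing core, with no LLM involved. It assumes the payload shape I understand Jira to send for issue updates (`webhookEvent`, `issue.key`, and a `changelog.items` list with `field` and `toString`). The status names, the action names, and `route_webhook` itself are hypothetical.

```python
from typing import Optional, Tuple

# Hypothetical mapping: the status a ticket just entered -> the agent action to trigger.
WAKE_ON_STATUS = {
    "Assigned to Agent": "plan",
    "Plan Approved": "implement",
}


def route_webhook(payload: dict) -> Optional[Tuple[str, str]]:
    """Return (issue_key, action) if this event should wake an agent, else None."""
    if payload.get("webhookEvent") != "jira:issue_updated":
        return None

    issue_key = payload.get("issue", {}).get("key")
    if not issue_key:
        return None

    # Only status changes matter; comments, edits, and other field changes are ignored.
    for item in payload.get("changelog", {}).get("items", []):
        if item.get("field") == "status":
            action = WAKE_ON_STATUS.get(item.get("toString"))
            if action:
                return issue_key, action
    return None
```

A small HTTP handler would parse the request body as JSON, pass it to `route_webhook`, and hand any non-`None` result to whatever mechanism actually starts the agent. Keeping the routing a pure function means it can be tested without Jira or an agent running.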

Most of my recent effort has actually been inside the planning and implementation stages. That means building out the skills the agents rely on.

They now have explicit skills for reading and writing Jira tickets, interacting with Git, running tests, and navigating the codebase in ways that align with how I like things organized.

Testing has been an interesting constraint to think about. When an agent runs tests on its own, I have it skip anything that would hit a live external service. Calls to third-party systems get mocked so the loop stays self-contained. The goal is fast iteration without accidentally touching production systems or external dependencies.
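As a small illustration of what "mocked" means here, this is a sketch using Python's standard `unittest.mock`. The `fetch_rate` and `price_in` functions and the `rates.example.com` URL are invented for the example; the point is that the test replaces the network call, so nothing leaves the machine.

```python
import unittest
import urllib.request
from unittest.mock import patch


def fetch_rate(currency: str) -> float:
    """Hypothetical call to a third-party HTTP API."""
    with urllib.request.urlopen(f"https://rates.example.com/{currency}") as resp:
        return float(resp.read().decode())


def price_in(currency: str, usd: float) -> float:
    """Convert a USD amount using the externally fetched rate."""
    return round(usd * fetch_rate(currency), 2)


class PriceTest(unittest.TestCase):
    def test_price_uses_mocked_rate(self):
        # Replace urlopen so the test never makes a real request.
        with patch("urllib.request.urlopen") as mock_urlopen:
            response = mock_urlopen.return_value.__enter__.return_value
            response.read.return_value = b"0.5"

            self.assertEqual(price_in("EUR", 10.0), 5.0)
            mock_urlopen.assert_called_once_with("https://rates.example.com/EUR")
```

Saved as a test module, this runs with `python -m unittest` and passes with the network unplugged.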

At this point I can hand an agent a well-defined Jira ticket and it can work its way to a completed feature. I still manually approve a couple of steps along the way, although I know those approvals could be removed if I wanted full autonomy.

For now I actually prefer keeping the checkpoints.

The pattern that keeps showing up is pretty simple. The clearer the task definition, the more effective the agent becomes. When the instructions are explicit and the available tools are well defined, the system behaves in a surprisingly predictable way.

Most of the work isn’t about prompting anymore. It’s about giving the agent a stable environment to operate in.

Clear task. Clear tools. Tight testing loop.

That combination seems to carry most of the weight.

Anyway, that’s today’s small report from the duck pond.
